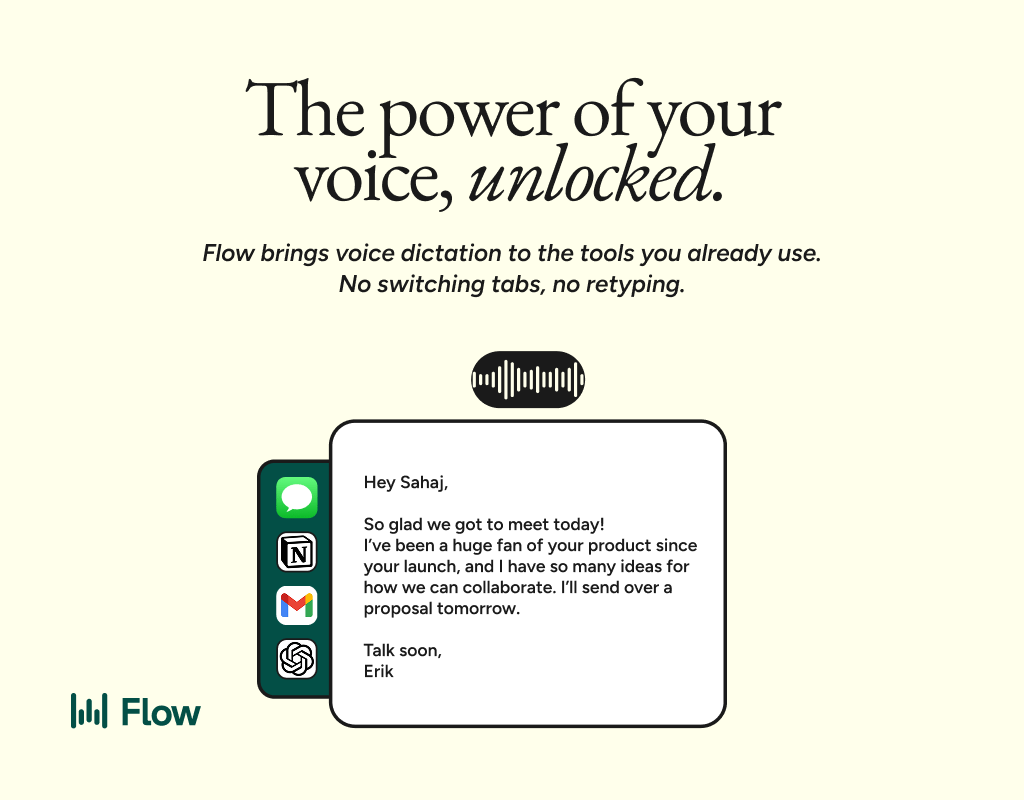

This issue is brought to you in partnership with Wispr Flow, voice-to-text AI that turns speech into clear, polished writing in every app you use. It's 4x faster than typing and works everywhere, from Slack to your CRM.

Clicking the sponsor links below helps fund the newsletter and keep these insights coming.

Better prompts. Better AI output.

AI gets smarter when your input is complete. Wispr Flow helps you think out loud and capture full context by voice, then turns that speech into a clean, structured prompt you can paste into ChatGPT, Claude, or any assistant. No more chopping up thoughts into typed paragraphs. Preserve constraints, examples, edge cases, and tone by speaking them once. The result is faster iteration, more precise outputs, and less time re-prompting. Try Wispr Flow for AI or see a 30-second demo.

Marketing teams are shipping more content than ever. Personalization is happening at scale. Response times are faster. Output is up across the board. The tools are working exactly as promised.

And yet, trust is collapsing.

Not internally. Internally, AI feels like a win. Efficiency is up. Costs are down. Teams are moving faster. But on the other side of the screen, customers are pulling back. They're skeptical. They're tuning out. They're starting to assume everything they see is generated, optimized, and soulless.

The gap isn't about capability anymore. It's about belief. And it's widening.

🍿 The Snack

Your marketing team's efficiency gain is creating a customer trust deficit.

AI has made it easier than ever to produce content, personalize outreach, and automate engagement. But the more we automate, the less customers believe what we're saying. The tools that help us move faster are the same ones making our audience slower to trust us.

This isn't a tool problem. It's a perception problem. And it's showing up in ways most teams aren't measuring yet: longer consideration cycles, lower engagement on "polished" content, and a growing assumption that if it looks too good, it probably isn't real.

The brands that win in 2026 won't be the ones producing the most content. They'll be the ones rebuilding trust in an environment where belief has become the bottleneck.

What's Actually Happening

Marketers are all in on AI. 83% of advertising executives now say their company uses AI in the creative process, up from 60% just a year ago. Cost efficiency has become the top benefit, cited by 64% of teams. AI is being used to write copy, generate images, personalize emails, and produce video at scale.

Meanwhile, 71% of Gen Z and Millennial consumers say they've seen an AI-generated ad. And their reaction isn't enthusiasm. It's caution.

Only 45% of these consumers feel positive about AI-generated advertising. That's down slightly from last year, even as usage has exploded. More concerning: 82% of advertisers believe consumers feel positive about AI ads. That 37-point gap between what advertisers believe (82%) and what consumers report (45%) has widened from 32 points in a single year.

Gen Z is leading the skepticism. 39% now feel negative about AI-generated ads, nearly double the rate among Millennials. They're more likely to describe brands using AI as "inauthentic," "disconnected," and "unethical." These aren't fringe opinions. They're becoming the default.

And it's not just ads. It's everything. Customers are encountering AI-generated content across email, social, support interactions, and product pages. The volume is overwhelming. The quality is inconsistent. And the result is a blanket assumption: if it's polished and fast, it's probably not real.

Why This Matters More Than It Looks

This isn't a temporary reaction to a new technology. It's a structural shift in how trust forms.

For decades, trust in marketing was built on consistency, quality, and human judgment. Customers assumed someone made a decision about what to say and how to say it. That assumption is breaking.

Now, customers assume the opposite. They assume automation. They assume optimization. They assume no one is actually thinking about them specifically. And that assumption changes everything.

It changes how they read emails. It changes how they evaluate ads. It changes how they interpret personalization. What used to feel thoughtful now feels algorithmic. What used to feel relevant now feels surveilled.

The second-order effect is even more dangerous: customers are starting to distrust all brand communication, not just the AI-generated stuff. When you can't tell what's real and what's automated, the safest assumption is that none of it is real.

This shows up in longer sales cycles. It shows up in lower engagement rates on content that should be performing. It shows up in customers asking more questions, demanding more proof, and defaulting to skepticism even when the message is good.

The cost isn't just in conversion rates. It's in the erosion of the relationship itself. Trust used to be an asset you could build over time. Now it's a liability you have to actively manage every time you hit send.

Where Most Teams Go Wrong

Most teams are solving for the wrong problem.

They see declining engagement and assume the content isn't good enough, so they produce more of it. They see longer sales cycles and assume the messaging isn't clear enough, so they personalize harder. They see skepticism and assume the creative isn't compelling enough, so they optimize further.

All of this makes the problem worse.

The issue isn't volume. It's belief. Customers don't need more content. They need a reason to trust the content they're already seeing.

But instead of addressing trust, teams double down on tactics. They A/B test subject lines. They refine targeting. They automate follow-ups. They treat the symptoms without diagnosing the disease.

Another common mistake: using AI to cut costs instead of to improve quality. 64% of advertisers now cite cost efficiency as the top benefit of AI; last year it ranked fifth. That shift is telling. It means teams are using AI to do more with less, not to do better.

Customers can feel the difference. When AI is used to replace human judgment instead of augmenting it, the output feels hollow. It's grammatically correct but emotionally flat. It's personalized but not personal. It checks the boxes without creating connection.

The other trap is assuming disclosure doesn't matter. Fewer than half of advertisers always disclose when AI is used, even though 73% of consumers say knowing an ad was AI-generated would either increase or have no impact on their likelihood to purchase. Teams are leaving trust on the table because they're worried about a backlash that the data says isn't coming.

What to Do Instead

Start by understanding your audience's relationship with AI. This isn't one-size-fits-all. Gen Z is significantly more skeptical than Millennials. B2B buyers have different expectations than B2C consumers. Healthcare and financial services audiences demand more transparency than entertainment audiences.

If you're targeting Gen Z, assume skepticism and plan accordingly. Don't lead with automation. Lead with authenticity. Use AI to support the work, not replace the human behind it. Be ready to explain why you're using AI and what it's helping you do better.

Next, use AI to raise quality, not just increase output. The brands that win will be the ones using AI to make better decisions, not faster ones. That means using AI for research, insight generation, and creative exploration, then applying human judgment to what actually ships.

If AI is helping you write faster but the writing isn't better, you're using it wrong. If it's helping you personalize at scale but the personalization feels generic, you're optimizing for the wrong metric. Quality has to be the filter, not speed.

Disclosure matters more than most teams think. When AI creates material risk that customers will be misled about what they're seeing, hearing, or interacting with, disclose it. This is especially true for video and images, where 56% and 54% of consumers, respectively, want transparency.

Disclosure doesn't hurt trust. It builds it. Customers aren't asking for perfection. They're asking for honesty. When you're upfront about using AI, you signal that you respect their intelligence and their right to make informed decisions.

Finally, measure trust, not just engagement. Track how customers describe your brand. Monitor sentiment in support conversations. Pay attention to the questions prospects are asking and the objections they're raising. If trust is eroding, you'll see it in the language people use before you see it in the metrics.

The goal isn't to avoid AI. It's to use it in ways that strengthen belief instead of undermining it. That requires different decisions, different priorities, and a willingness to move slower in the short term to build something that lasts.

The irony is hard to miss.

We built AI to help us connect with customers at scale. And in doing so, we've made it harder for customers to believe the connection is real.

The tools aren't the problem. The assumptions are. We assumed efficiency would lead to better outcomes. We assumed personalization would feel personal. We assumed customers would keep up with our pace of change.

They didn't. And now we're in a moment where the thing helping us move faster is the same thing making our audience slower to trust us.

The answer isn't to stop using AI. It's to stop using it carelessly, and to recognize that every automated message, every generated image, every optimized interaction is either building belief or eroding it. And right now, for too many teams, it's doing the latter.

Trust isn't a feature you can automate. It's a relationship you have to earn. And in 2026, that's going to require more intention, more transparency, and more human judgment than most teams are currently applying.

The gap is real. But it's not permanent. The teams that close it will be the ones that remember what they're actually optimizing for: not output, but belief.

Stay Hungry,